8:30AM – Arrive at work

Hopefully, I arrive at work just in time for the first tea round – makes for a much better start to my day!

This time is usually used for catching up on emails and picking up where I left off yesterday. It’s useful to remind myself where I got to and to try to finish what I was in the middle of before stand up begins.

9:50AM – Stand up

This time is used to feed back to the team on my progress yesterday and what I intend to do today.

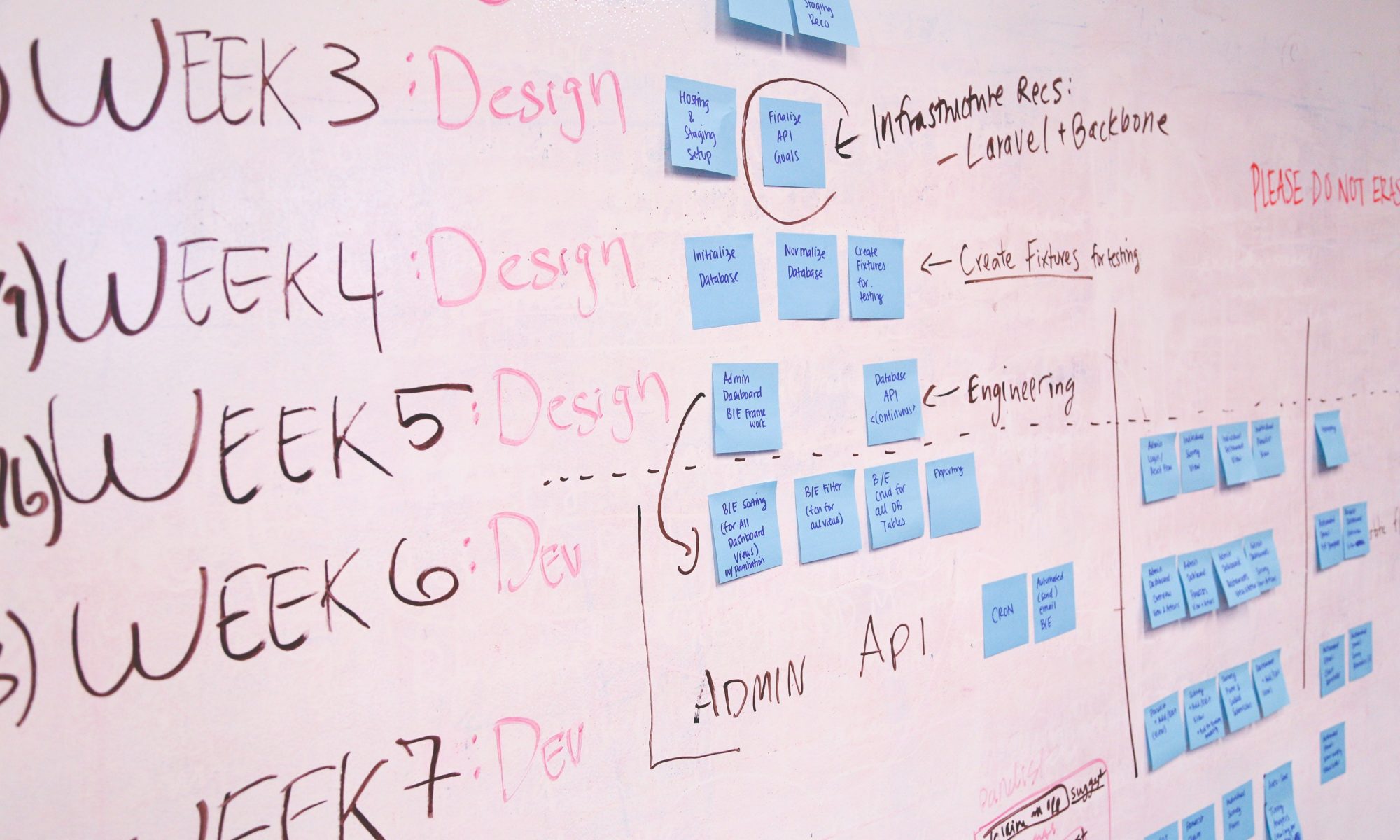

Now is a good time to pose any questions I have and to mention anything that may be blocking my work. For this stand up, it’s also time to look at the Kanban board for our project. This is something new our team is trying out, to see if we can get better visibility of what is being achieved during this project than we had on past projects. It is not without its pains, but the general feeling is that it has been worthwhile to try out.

10:00AM – After stand up discussions

Since we’ve already been interrupted by stand up, now is the perfect time to discuss anything we have preying on our minds.

This can be anything from a simple user journey question to working together to overcome a problem. Today that means bringing our new team member up to speed with the project and agreeing which outstanding tasks I would like them to do first. I’m working on the front end of this project and would like the call that fetches historical data to be completed so that I can progress further with my screen.

10:15AM – Time to get buried in code

Hoping for no more interruptions until lunch – it’s time for me to get knee deep in code.

Usually this involves working with legacy code and that is part of the battle! However, I am in the very fortunate position that this project is very greenfield. Whilst we are adding features to already existing functionality, our team have taken this opportunity to start fresh. A lot of the old code was written to an older standard, with older ideas about the project and older technologies in play. Now we can bring in newer technologies and our new way of doing things.

For instance, we are using Durandal for the new set of screens we are creating. Not only do we want this to feel like a single-page application, but using Durandal also helps us to modularise our front end code much better. All in all, this code will be much cleaner to work with in the future, and starting from scratch will benefit us greatly (plus it makes my job so much easier!).

12:30PM – Lunch

Time to get out of the office and clear my head.

Well, sort of… Trips to lunch can easily turn into further discussion about problems we are facing in the project and how we might overcome them. It’s not a bad thing; it just shows how challenging the work can be, and naturally you’ll want time to mull it over with someone.

1:30PM – Getting back to it

Now it’s time to get back to programming.

Today, I’m trying not to fiddle too much with the UI side of things. Instead, I want to get enough functionality into the screen so that I can complete at least one user journey. I’m working on what we’re calling a set up screen, which allows the user to set goals for their stores. This is by far the hardest screen in the project because there is just so much functionality, and so many different possibilities and user journeys to consider. Now and then, I will come across a problem or a scenario that I want clarifying, but for the most part I am able to just get on with it.

Once I am satisfied that my JavaScript is working as it should, I try out a few quick scenarios. It doesn’t take me long to find an issue, which is unsurprising at this stage of development. This is the first time I will have called some of the controllers. In this instance, it is the creation of a certain type of goal that has brought up an error, so it’s time to take a look at the server side to sort out the problem.

5:15PM – Home Time

By the end of the day, I hope to have got a big part of the functionality done.

You can’t necessarily complete a Kanban task or sub task in a day, so I always try to give myself an aim for where I want to be with that task at the end of a day. While I try to leave my work at a good point to pick up for the next day, that doesn’t happen often.

Honestly, the time usually flies by, and it is only when others start leaving that I am reminded it is time for me to go.